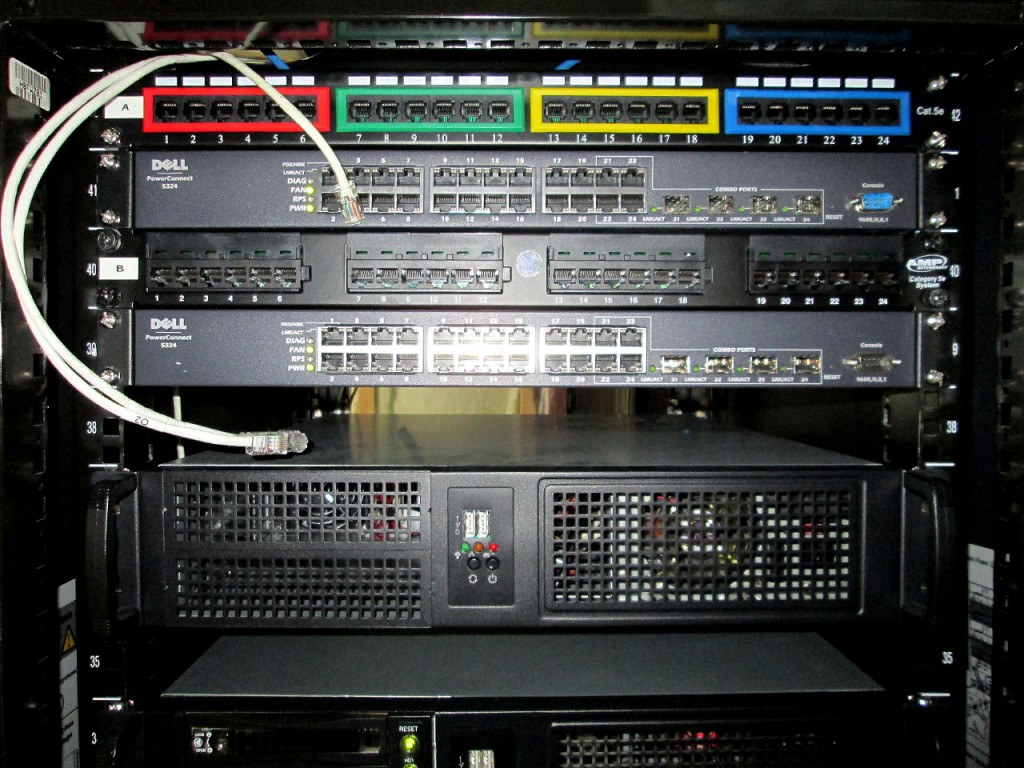

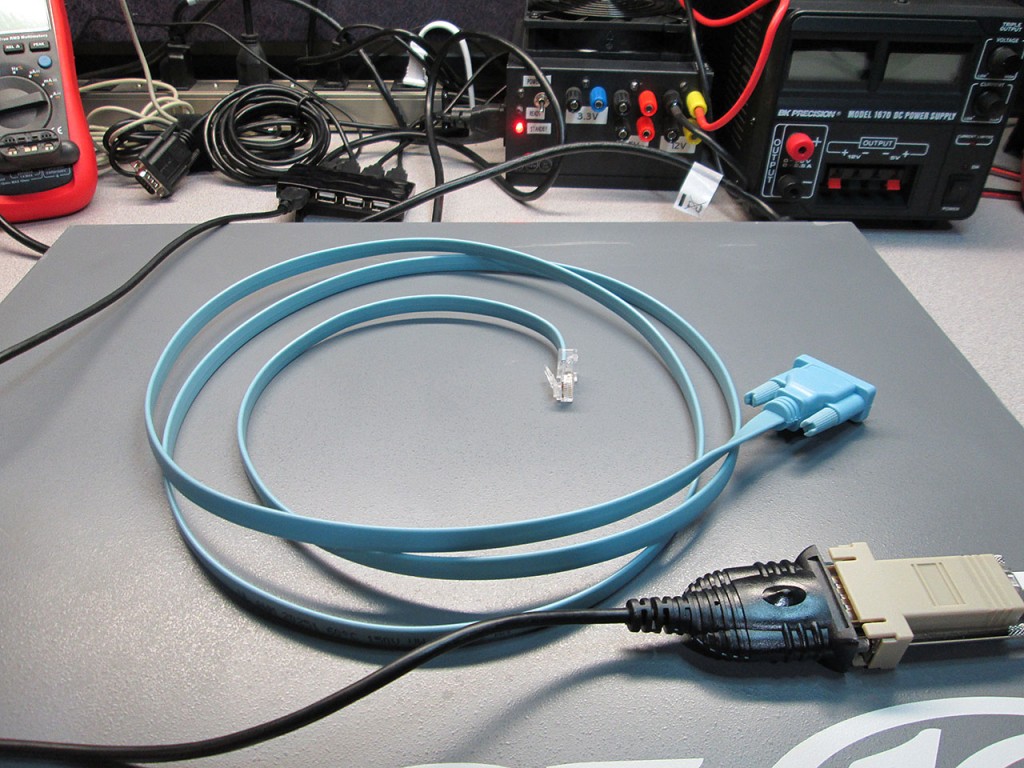

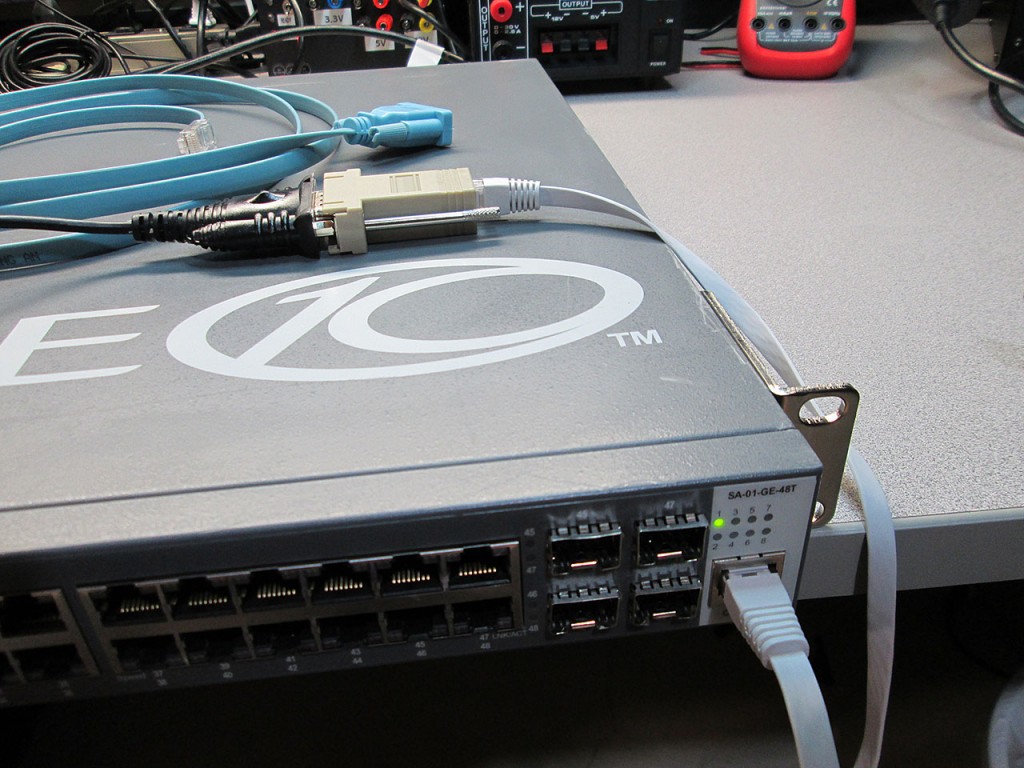

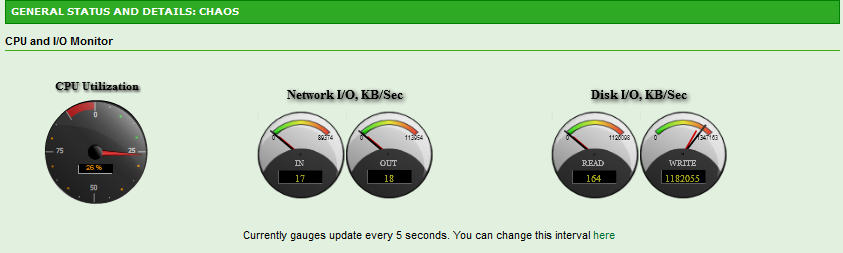

A while ago I built a new NexentaStor server to serve as the home lab SAN. I also picked up a low-latency Force10 switch to handle the SAN traffic (among other things).

Now the time has come to test vSphere iSCSI MPIO and try to get a faster-than-1Gb/s connection to the datastore, which has been a huge bottleneck when using NFS.

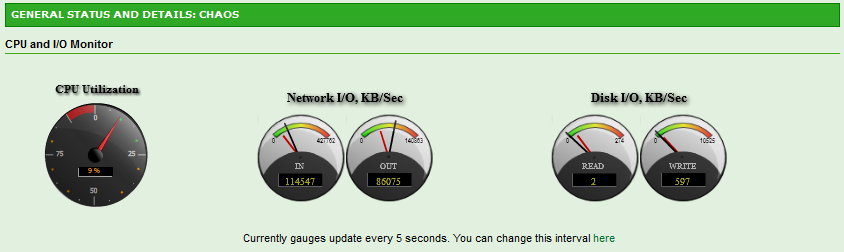

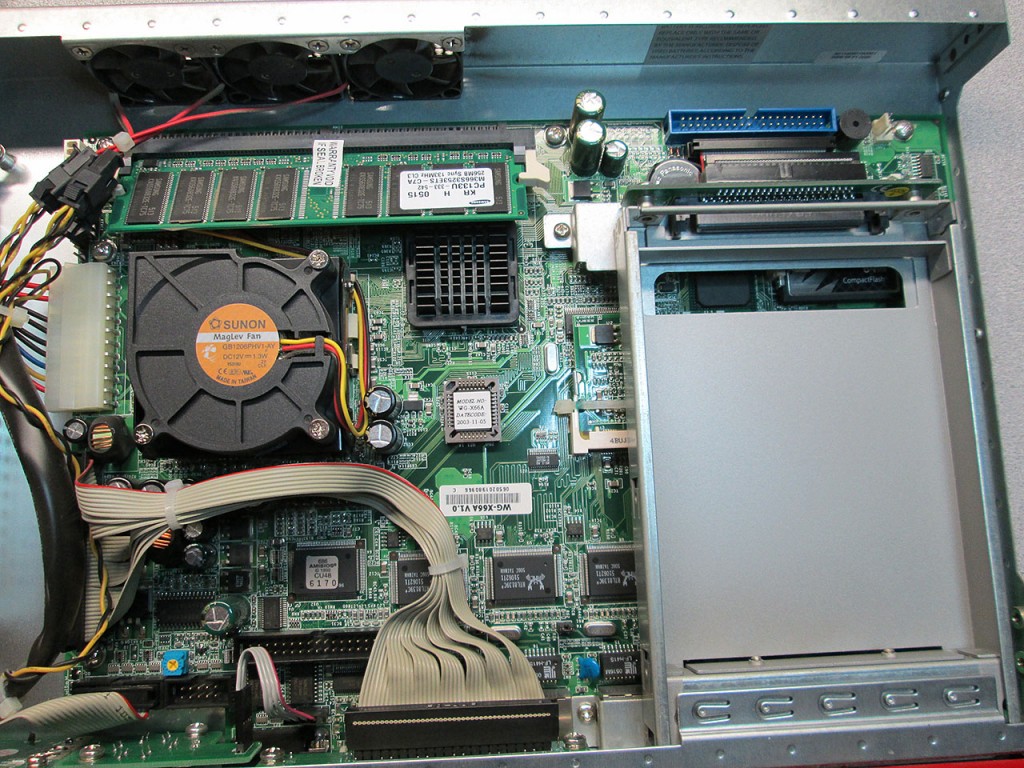

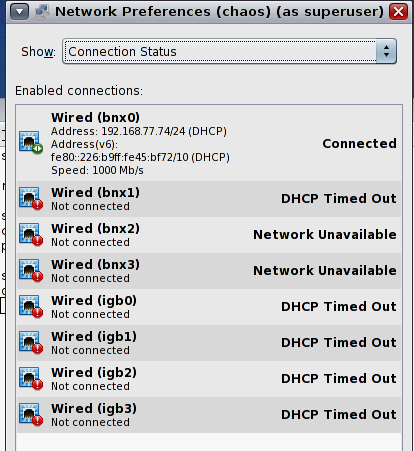

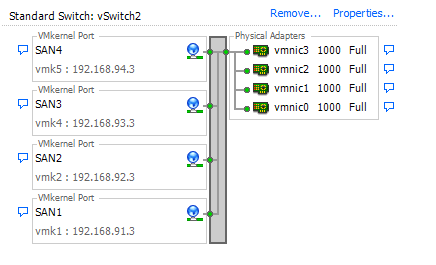

The setup on each machine is as follows.

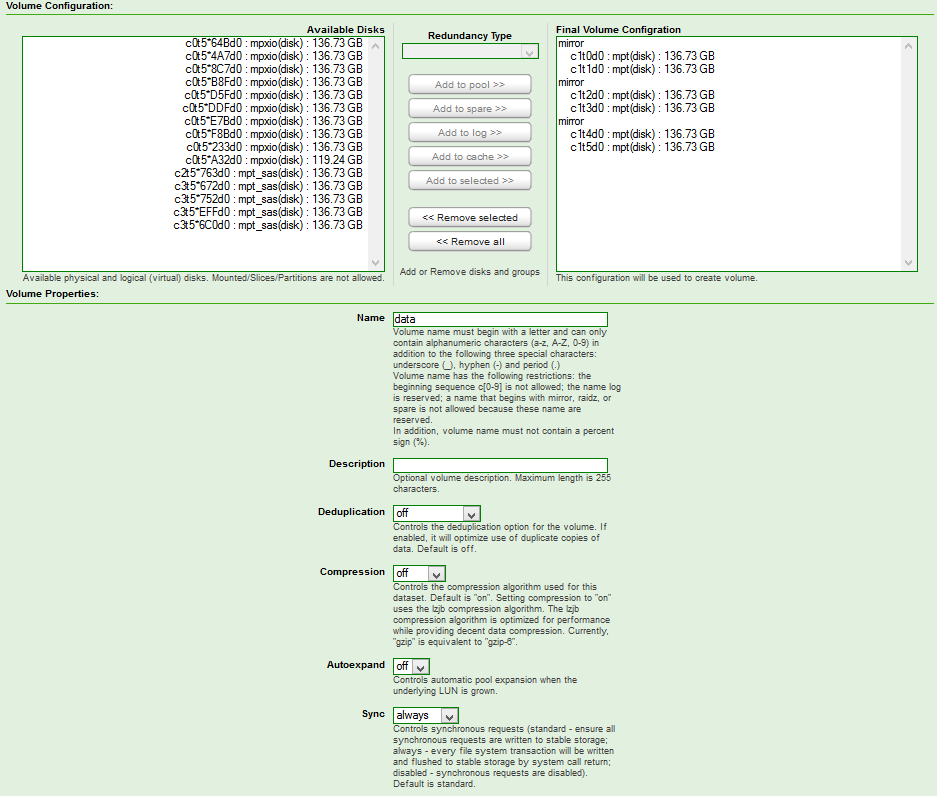

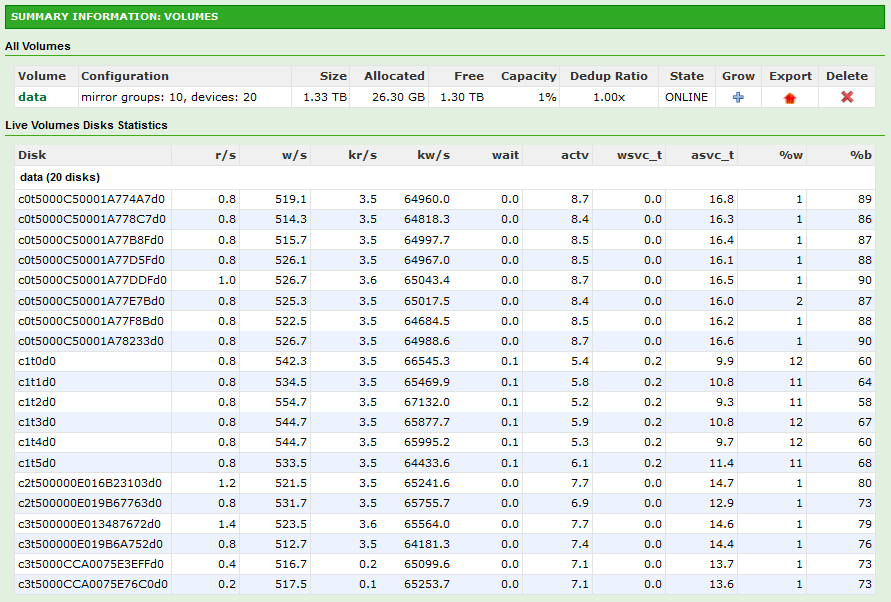

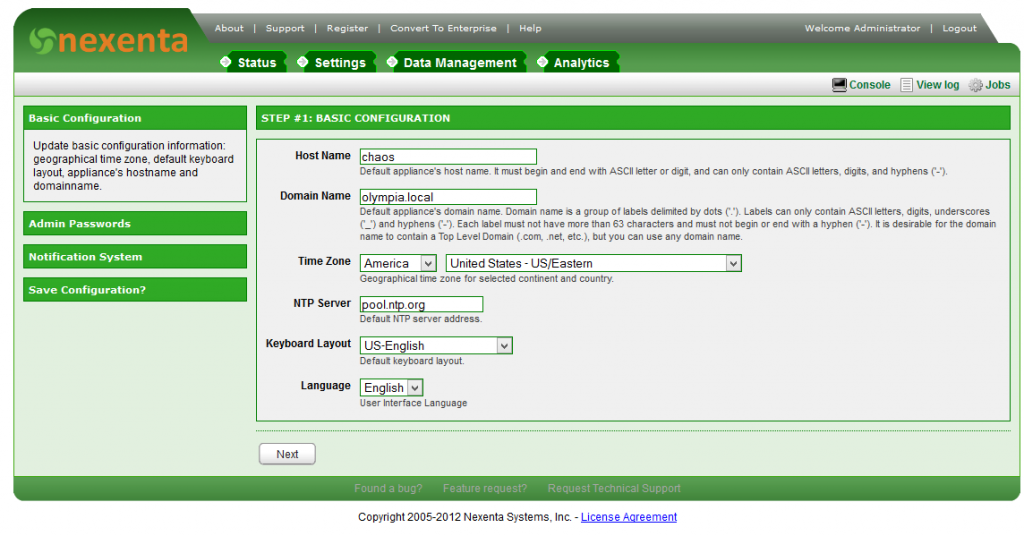

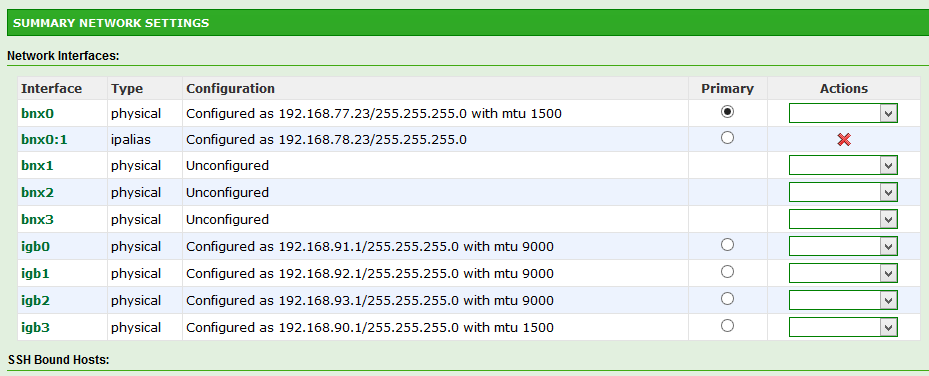

NexentaStor

- 4 Intel Pro/1000 VT Interfaces

- Each NIC on separate VLAN

- Nagle disabled

- Compression on Target enabled

vSphere

- 4 On-Board Broadcom Gigabit interfaces

- Each NIC on separate VLAN

- Round Robin MPIO

- Balancing: IOPS, 1 IO per NIC

- Delayed Ack enabled

- VM test disk Eager Thick Provisioned

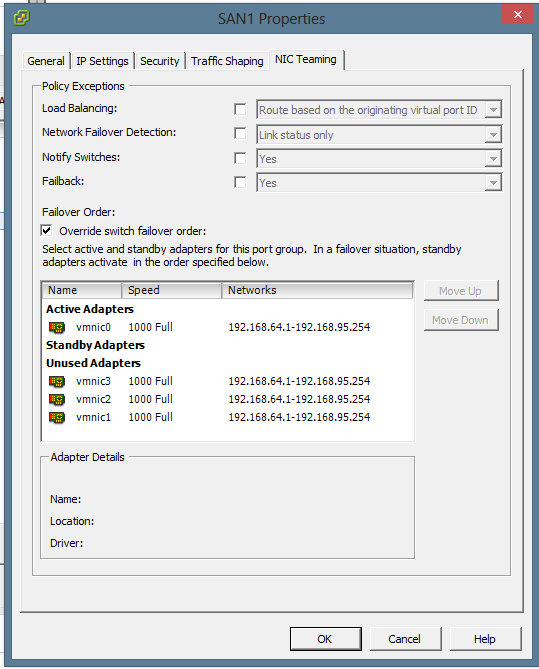

Networking on vSphere was configured via a single vSwitch, though the pNICs were assigned individually to each vNIC.

Round robin balancing was configured via vSphere, and the IOPS per NIC was changed via the console:

~ # esxcli storage nmp psp roundrobin deviceconfig set --device naa.600144f083e14c0000005097ebdc0002 --iops 1 --type iops

~ # esxcli storage nmp psp roundrobin deviceconfig get -d naa.600144f083e14c0000005097ebdc0002

Byte Limit: 10485760

Device: naa.600144f083e14c0000005097ebdc0002

IOOperation Limit: 5

Limit Type: Iops

Use Active Unoptimized Paths: false
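The per-device setting above has to be repeated for every LUN. A minimal sketch of doing that in a loop from the ESXi shell follows. It assumes that `esxcli storage nmp device list` prints each device identifier at the start of a line with its attributes indented beneath, and that every `naa.` device on the host should use round robin, which may not hold if local disks also show up as `naa.` devices. The function names are hypothetical.

```shell
# Hypothetical helper: from `esxcli storage nmp device list` output on stdin,
# keep only the lines that start with a naa.* device identifier.
list_naa_devices() {
    grep '^naa\.'
}

# Hypothetical helper: switch one device to round robin and set how many
# I/Os are sent down a path before rotating to the next one.
set_rr_iops() {
    esxcli storage nmp device set --device "$1" --psp VMW_PSP_RR
    esxcli storage nmp psp roundrobin deviceconfig set --device "$1" --type iops --iops "$2"
}

# Apply the setting to every naa.* device.
# Assumption: all of them are iSCSI LUNs that should use round robin.
apply_rr_all() {
    esxcli storage nmp device list | list_naa_devices | while read -r dev; do
        set_rr_iops "$dev" "$1"
    done
}
```

Calling `apply_rr_all 1` would then request 1 IO per path on each device; the `deviceconfig get` command shown above can be used to confirm what was actually applied.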

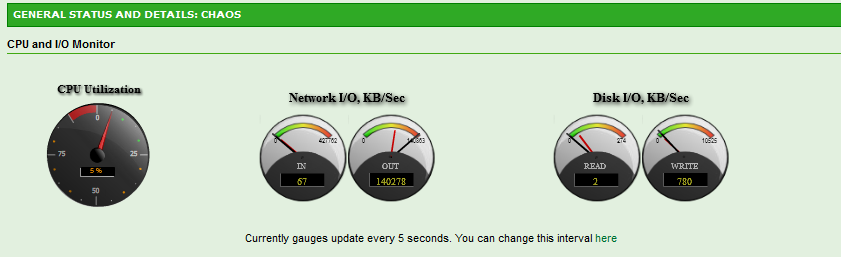

Testing was done inside a CentOS VM because, for some reason, testing directly in the vSphere console only reached a maximum transfer rate of 80MB/s, even though the traffic was always split evenly across all 4 interfaces.

Testing was done via dd commands:

[root@testvm test]# dd if=/dev/zero of=ddfile1 bs=16k count=1M

[root@testvm test]# dd if=ddfile1 of=/dev/null bs=16k
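For reference, `bs=16k count=1M` means 1,048,576 blocks of 16 KiB, so each run moves 16 GiB. A small sketch of that arithmetic, plus a parameterized version of the same write/read pair for quicker trial runs; the function names and the directory argument are hypothetical and not part of the original test.

```shell
# Size in GiB of a dd transfer, given the block size in KiB and the block count.
dd_size_gib() {
    bs_kib=$1
    count=$2
    echo $(( bs_kib * count / 1024 / 1024 ))
}

# Hypothetical wrapper around the same two dd commands:
# write zeros to <dir>/ddfile1, then read the file back to /dev/null.
dd_roundtrip() {
    dir=$1
    bs_kib=$2
    count=$3
    dd if=/dev/zero of="$dir/ddfile1" bs="${bs_kib}k" count="$count"
    dd if="$dir/ddfile1" of=/dev/null bs="${bs_kib}k"
}
```

`dd_size_gib 16 1048576` gives the size of the test file used here, and `dd_roundtrip /path/to/test 16 1048576` would repeat the original pair of commands.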

The initial test was done with what I thought was the ideal scenario.

| NexentaStor MTU | vSphere MTU | VM Write | VM Read |
| --- | --- | --- | --- |
| 9000 | 9000 | 394 MB/s | 7.4 MB/s |

What the? 7.4 MB/s reads? Repeated the test several times to confirm. Even tried it on another vSphere server and a new test VM. After some Googling, it looks like it might be an MTU mismatch, so let’s try with the standard 1500 MTU.
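One common way to check whether jumbo frames really pass end to end is a ping with fragmentation disallowed and a payload sized to fill the MTU exactly. The sketch below only computes the payload size and prints the commands; it assumes IPv4 (20-byte IP header, 8-byte ICMP header), and the target address is a placeholder to be replaced with the SAN interface on the same VLAN. The function names are hypothetical.

```shell
# Largest ICMP echo payload that fits in one unfragmented IPv4 packet:
# MTU minus 20 bytes of IPv4 header minus 8 bytes of ICMP header.
ping_payload() {
    echo $(( $1 - 20 - 8 ))
}

# Print the check commands for a given MTU and target address.
# The address is a placeholder supplied by the caller.
jumbo_check_cmds() {
    size=$(ping_payload "$1")
    echo "vmkping -d -s $size $2"    # ESXi shell: -d sets the don't-fragment bit
    echo "ping -M do -s $size $2"    # Linux guest: -M do forbids fragmentation
}
```

For example, `jumbo_check_cmds 9000 192.0.2.10` prints the two commands with a payload of 8972 bytes. If such a ping fails while a default-size ping succeeds, some hop on the path is not passing 9000-byte frames.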

| NexentaStor MTU | vSphere MTU | VM Write | VM Read |
| --- | --- | --- | --- |
| 1500 | 1500 | 367 MB/s | 141 MB/s |

Write speed drops a bit due to the smaller MTU, but for some reason reads max out at only 141MB/s. That is much faster than with MTU 9000 but nowhere near the write speeds. There is definitely an MTU issue at work when using jumbo frames, although the 141MB/s read limit still doesn’t make sense. The traffic was still evenly split across all interfaces. Next, trying to match up the MTUs better. Could it be that either NexentaStor or vSphere doesn’t account for the TCP header?
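To put a number on how much a smaller MTU should cost, here is a rough estimate of the share of wire time that carries TCP payload. It assumes IPv4 and TCP headers of 20 bytes each with no options, and 38 bytes of per-frame Ethernet overhead (14 header + 4 FCS + 8 preamble + 12 inter-frame gap); iSCSI's own headers are ignored. As a side note, 9000 - 8982 = 18 bytes, which matches an Ethernet header plus FCS rather than a 20-byte TCP header, though whether either side actually miscounts that is not established here.

```shell
# Percentage of wire time that carries TCP payload for a given MTU.
# Assumes 40 bytes of IPv4 + TCP headers inside the MTU and
# 38 bytes of Ethernet framing overhead outside it.
wire_efficiency() {
    awk -v mtu="$1" 'BEGIN { printf "%.1f\n", (mtu - 40) / (mtu + 38) * 100 }'
}
```

Under these assumptions `wire_efficiency 1500` gives 94.9 and `wire_efficiency 9000` gives 99.1, so framing overhead alone accounts for only a few percent of difference between the two MTUs.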

| NexentaStor MTU | vSphere MTU | VM Write | VM Read |
| --- | --- | --- | --- |
| 8982 | 8982 | 165 MB/s | ? MB/s |

Had to abort the read test as it seemed to have stalled completely. During writes the speeds fluctuated wildly. Yet another test.

| NexentaStor MTU | vSphere MTU | VM Write | VM Read |
| --- | --- | --- | --- |
| 9000 | 8982 | 356 MB/s | 4.7 MB/s |
| 8982 | 9000 | 322 MB/s | ? MB/s |

Once again had to abort the reads due to a stalled test. Not sure what’s going on here. But for giggles, decided to try another uncommon MTU size: 7000.

| NexentaStor MTU | vSphere MTU | VM Write | VM Read |
| --- | --- | --- | --- |
| 7000 | 7000 | 417 MB/s | 143 MB/s |

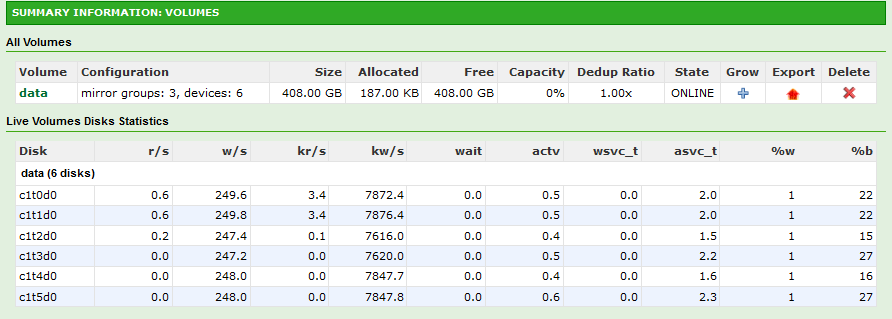

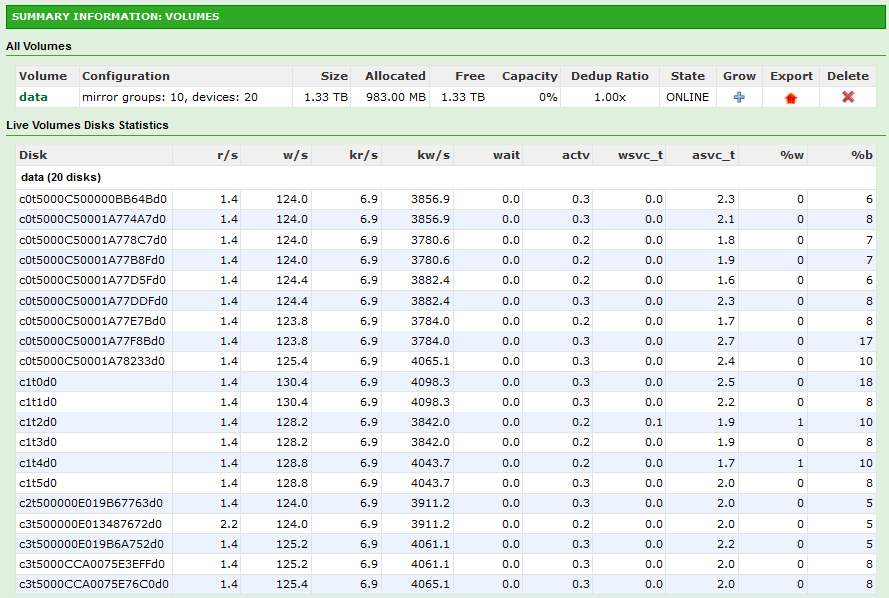

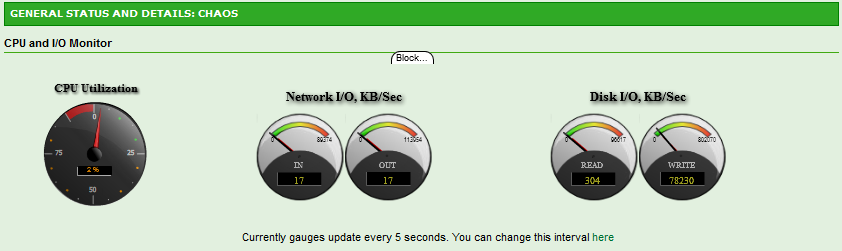

Hmm. Very unusual. Not exactly sure what the bottleneck is here. Still, definitely faster than a single 1Gb NIC. The disks on the SAN are definitely not the issue, as the IO never actually hits the physical disks.

Another quick test was done by copying the test file to another file via dd. The results were also quite surprising.
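To compare these figures against the links themselves, the dd numbers can be converted to Gbit/s and to a share of the raw aggregate line rate. A minimal sketch, assuming dd's MB means 10^6 bytes and ignoring all protocol overhead; the function names are hypothetical.

```shell
# Convert MB/s to Gbit/s (decimal units: 1 MB = 10^6 bytes).
mbs_to_gbit() {
    awk -v mbs="$1" 'BEGIN { printf "%.2f\n", mbs * 8 / 1000 }'
}

# Throughput as a percentage of the raw line rate of N gigabit links.
pct_of_links() {
    awk -v mbs="$1" -v n="$2" 'BEGIN { printf "%.0f\n", mbs * 8 / (n * 1000) * 100 }'
}
```

With the MTU 7000 results, `pct_of_links 417 4` gives 83 for writes and `pct_of_links 143 4` gives 29 for reads, so reads use a little more than one link's worth of bandwidth while writes use most of the four.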

[root@testvm test]# dd if=ddfile1 of=ddfile2 bs=16k

This is another one I didn’t expect. The result was only 92MB/s, which is less than the speed of a single NIC. At this point I spawned another test VM to test concurrent IO performance.

The same test repeated concurrently on two VMs resulted in about 68MB/s each. Definitely not looking too good.

Performing a pure dd read on each VM did, however, achieve 95MB/s per VM, so the interfaces are better utilized. Repeating the tests with MTU 1500 resulted in 77MB/s (copy) and 133MB/s (pure read).

Conclusion: jumbo frames at this point do not offer any visible advantage. For stability’s sake, sticking with MTU 1500 sounds like the way to go. Further research required.