A while ago I picked up a couple of Intel SR1680MV 1U Servers from Kijiji for next to nothing. Each server contains two nodes that are completely standalone systems.

Server 1: 2 Nodes

Each Node:

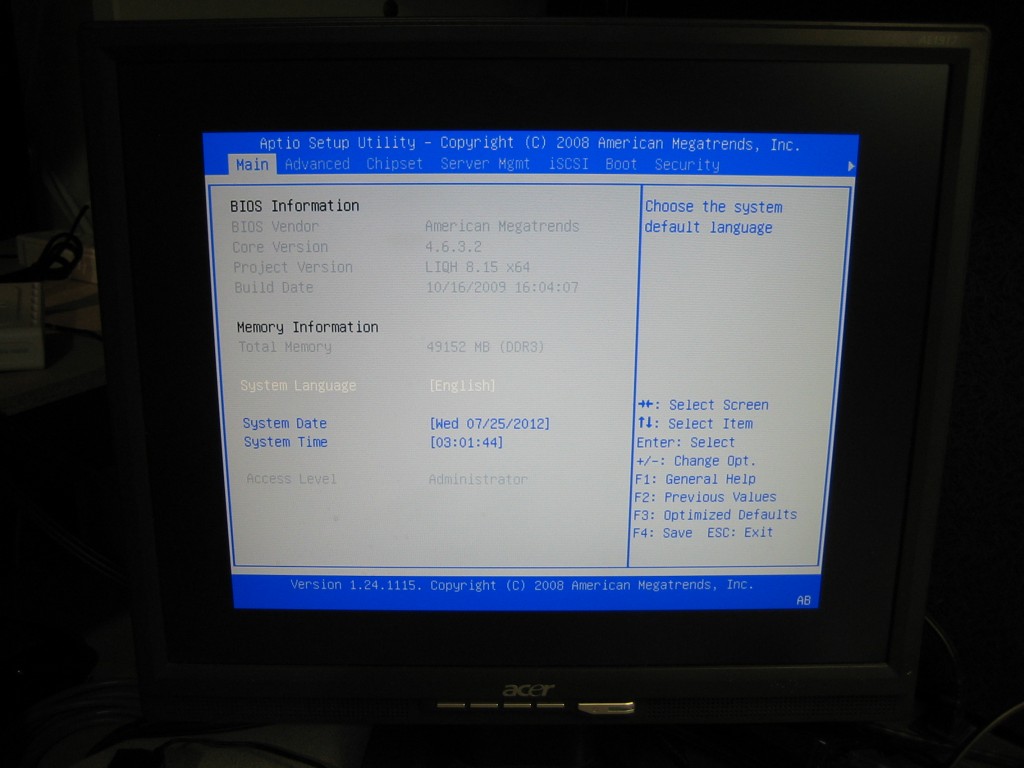

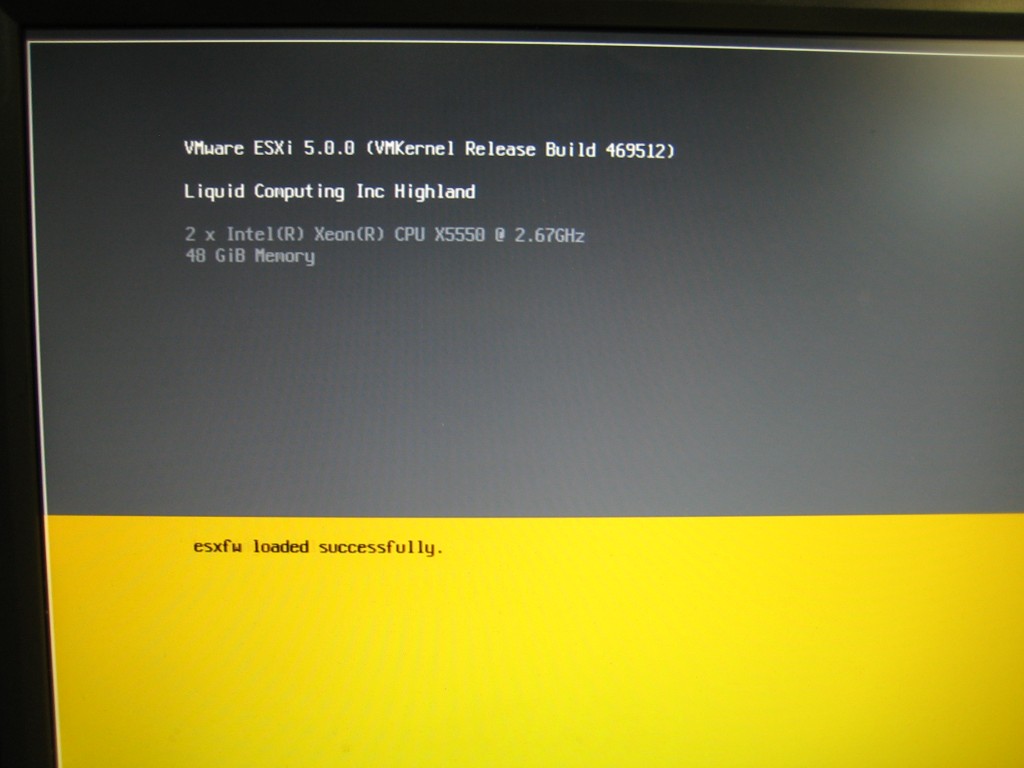

CPU: 2x Intel X5550 Quad Core HT (8 Threads)

RAM: 48GB DDR3 1066

HD: None

Server 2: 2 Nodes

Each Node:

CPU: 2x Intel E5520 Quad Core HT (8 Threads)

RAM: 72GB DDR3 1066

HD: 2x 2.5″ 256GB SATA

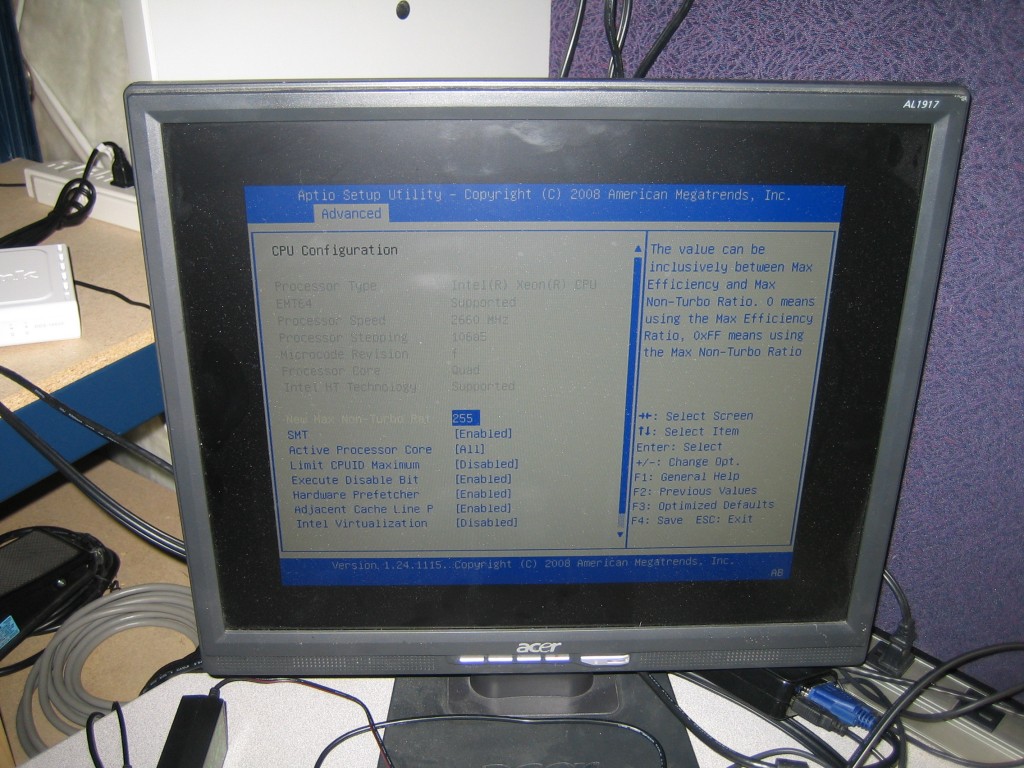

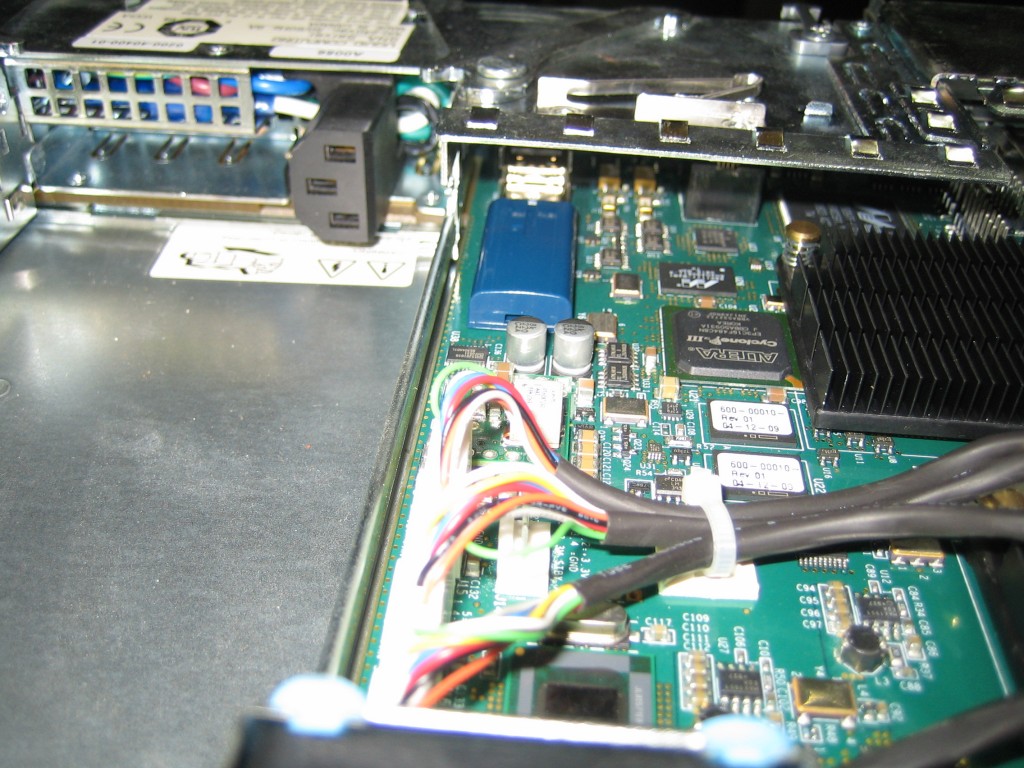

From what I can gather, these systems are modified versions of the normal SR1680 server by a now-defunct company named Liquid Computing out of Ottawa. I’ve tried digging up more info on these servers, as they use some kind of proprietary backplane with a 10GbE interface. There’s an expandable on-board memory slot, several pin headers, RJ45 connectors and some large processors covered by massive heatsinks, which I haven’t had a chance to look at yet. It’d be really interesting to find out what all this hardware was capable of.

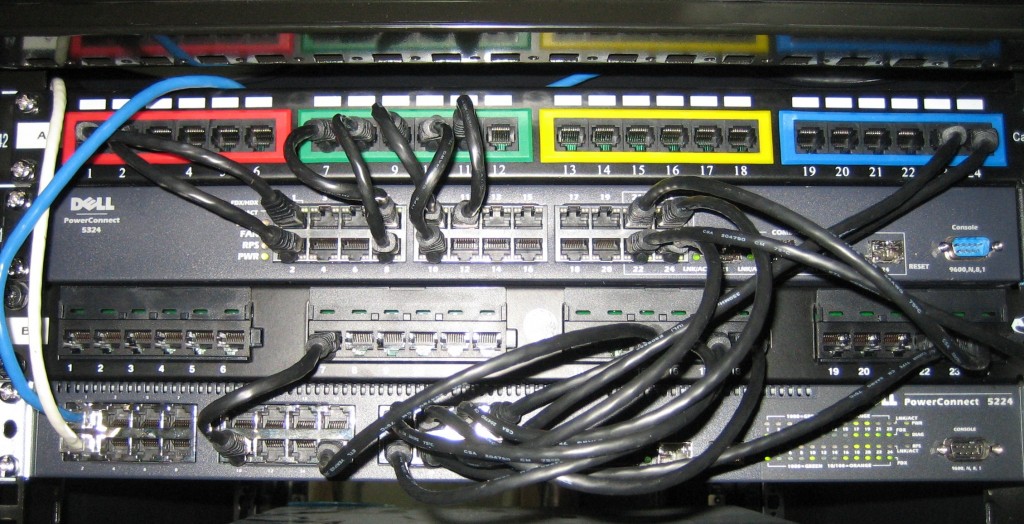

One of the servers will end up at a datacenter for hosting purposes; the other one I’ll keep in my home rack. Because the backplane is of no real use to me, I’ll use Intel quad NICs to add networking to the nodes.

I also ran into a small snag with the USB sticks I bought. They were physically too thick for both to fit into the adjacent slots of the internal USB connector. I ended up removing the outer sleeves and it worked like a charm. It’s an odd design to have a single USB header that provides USB storage to two separate nodes.

Installing vSphere went without a hitch. What I found really interesting is that the 10GbE backplane interface is actually shared across the two nodes. Each vSphere instance sees 4 NICs with the exact same MACs.
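
For anyone wanting to double-check this kind of overlap on their own hardware, here is a minimal Python sketch. It assumes you have copied the MAC addresses from each host by hand (for example from the output of `esxcli network nic list`). The host names and MAC values below are invented placeholders, not the actual addresses from these nodes, and `shared_macs` is just a hypothetical helper name.

```python
from collections import defaultdict


def shared_macs(nics_by_host):
    """Return {mac: sorted list of hosts} for MACs reported by more than one host."""
    seen = defaultdict(set)
    for host, macs in nics_by_host.items():
        for mac in macs:
            # Normalize case and separators so "00-1A-..." matches "00:1a:..."
            seen[mac.lower().replace("-", ":")].add(host)
    return {mac: sorted(hosts) for mac, hosts in seen.items() if len(hosts) > 1}


# Placeholder data: host names and MACs are made up for illustration only.
nics = {
    "node-a": ["00:11:22:33:44:01", "00:11:22:33:44:02", "AA:BB:CC:00:00:01"],
    "node-b": ["00-11-22-33-44-01", "00:11:22:33:44:02", "AA:BB:CC:00:00:02"],
}

for mac, hosts in sorted(shared_macs(nics).items()):
    print(mac, "seen on", ", ".join(hosts))
```

Any MAC that shows up in the result is visible from more than one node, which is what you would expect if the interface really is shared rather than duplicated per node.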

To Be Continued…